AI model selection has become more complex. There is no single AI model that works best for every use case. Teams now choose from many options, including open source models and proprietary systems. Each model has different strengths. Some offer better performance, while others focus on lower cost, faster response time, or stronger privacy.

Choosing the right model in 2025 requires careful trade-offs. Cost, latency, privacy, and model accuracy all matter. The number of available models can make the decision difficult for many teams.

Start with a simple approach and expand when needed. This AI model selection guide helps teams evaluate options and choose models that fit their business goals and real product use cases.

What Is AI Model Selection

AI model selection is the process of choosing the right AI model for a machine learning project. Teams review different models and evaluate model capabilities, model characteristics, and model performance. The goal is to find the most appropriate model for specific use cases. A structured approach helps compare candidate models based on accuracy, efficiency, and operational cost. Training data, model complexity, and cost constraints also influence the final model choice.

Model selection becomes more complex as more available models enter the market. Options now include open source models, pretrained models, large language models, and specialized models built for a specific purpose. Some models handle text generation, code generation, sentiment analysis, or visual data, while others focus on reasoning capabilities or complex tasks.

A strong model evaluation process helps teams compare multiple models and mitigate risk. Teams often test simpler models before moving to deep neural networks or hybrid architectures. Fine-tuning, model updates, and context window size also affect model behavior. Careful evaluation helps teams select a model that meets business objectives and delivers superior results in real-world applications.

Key Factors To Consider Before Choosing ML Models

Before you commit to any AI model selection decision, you need to review several factors that determine whether a model will work for your business. These considerations go beyond simple benchmark scores and require a broader understanding of artificial intelligence software types and uses.

Understanding Your Use Case And Business Goals

Start by outlining your use case. Do you want to automate tasks, analyze data, build chatbots, or simply adopt smarter software tools to simplify day-to-day work? The answer determines which machine learning model to use. Your model choice should match the model's capabilities to your business goals.

Define what your model needs to do. Typical tasks include sentiment analysis, code generation, and real-time conversation. Clear problem framing helps you narrow options and choose a model that delivers the best performance, accuracy, and efficiency for your specific use cases. Picking the right model depends on your objectives and constraints.

Determine whether your problem is general-purpose or domain-specific. General tasks might work with pretrained models, while specialized models excel in focused areas. Sentiment analysis requires different model characteristics than code generation or retrieval augmented generation systems.

Budget And Resource Constraints

Cost considerations affect every AI model selection decision, especially when comparing AI automation vs traditional automation for different workflows. When building large language models, developers want to maximize model performance under a particular computational and financial budget. Training a model can cost millions of dollars, so you need to be careful with cost-impacting decisions about model architecture, optimizers, and training datasets before committing to a model.

The total training compute of a transformer scales as C ∝ N × D, where N is the number of model parameters, D is the number of training tokens, and C is the compute budget. For a fixed C, N and D are inversely proportional to each other. For small compute budgets, smaller models trained on most of the available data perform better than larger models trained on very little data. For large compute budgets, larger models become better when enough data is available.
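The trade-off between N and D is easier to see with numbers. The sketch below assumes the commonly cited approximation C ≈ 6 × N × D training FLOPs. The constant 6 is an assumption that does not come from this guide, and the budget and model sizes are purely illustrative.

```python
# Rough compute-budget arithmetic, assuming C ≈ 6 * N * D training FLOPs.
# The constant 6 is a commonly cited approximation, not a figure from this guide.
FLOPS_PER_PARAM_TOKEN = 6.0


def tokens_for_budget(compute_flops: float, n_params: float) -> float:
    """Return how many training tokens D a fixed budget C affords for N parameters."""
    return compute_flops / (FLOPS_PER_PARAM_TOKEN * n_params)


budget = 6e21  # hypothetical fixed compute budget in FLOPs
for n_params in (1e9, 1e10, 1e11):
    d_tokens = tokens_for_budget(budget, n_params)
    print(f"N = {n_params:.0e} params -> D = {d_tokens:.2e} tokens")
```

Each tenfold increase in parameters leaves one tenth of the tokens for the same budget, which is why a larger model only pays off when enough data and compute are available.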

Cost structures vary. Larger context windows increase input processing costs. Multimodal inputs like images or audio add preprocessing and tokenization overhead. Implementing 4-bit quantization reduces deployment costs but introduces performance drops averaging around 2%. Tasks like logical reasoning suffer most at -6.5%, while simpler tasks such as text generation see minimal effect at -0.3%.

Data Availability And Quality Requirements

Data quality affects model performance, reliability, and trustworthiness. Poor data quality can lead to unreliable models that hinder decision-making and predictions. Most AI failures are rooted in poor data quality, not flawed models or tools.

AI-ready data must be representative of the use case and include every pattern, error, outlier, and unexpected event needed to train or run an AI model for a specific purpose. High-quality data meets quality standards across several key dimensions: accuracy, completeness, consistency, timeliness, and validity.

Different AI techniques have unique data requirements. Proper annotation and labeling are vital, especially for visual data. Data must meet quality standards specific to the AI use case, even if it has errors or outliers. Diversity matters. Include diverse data sources to avoid bias. Maintain transparency about data origins and transformations through proper lineage tracking.

Latency And Performance Needs

Response speed affects user experience and throughput determines scalability. In production generative AI applications, responsiveness is just as important as the intelligence behind the model. Every second of delay can affect adoption, especially in AI-driven automation within SaaS platforms.

Time to first token (TTFT) represents how fast your streaming application starts responding. Output tokens per second (OTPS) indicates how fast your model generates new tokens after it starts responding. End-to-end latency measures the total time from request to complete response.
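The three latency metrics above can be measured from any token stream. The following is a minimal sketch: `measure_stream` and `fake_stream` are hypothetical names, and the fake stream simply sleeps to imitate a model, so swap in your provider's streaming iterator when testing for real.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_stream(tokens: Iterable[str], clock: Callable[[], float] = time.perf_counter) -> dict:
    """Consume a token stream and report TTFT, OTPS, and end-to-end latency.

    The request is assumed to start when this function is called.
    """
    start = clock()
    first_token_at = None
    count = 0
    for _ in tokens:
        now = clock()
        if first_token_at is None:
            first_token_at = now  # arrival time of the first token
        count += 1
    end = clock()
    if first_token_at is None:
        raise ValueError("stream produced no tokens")
    generation_time = end - first_token_at
    return {
        "ttft_s": first_token_at - start,
        # Tokens produced after the first one, per second of generation time.
        "otps": (count - 1) / generation_time if generation_time > 0 else 0.0,
        "end_to_end_s": end - start,
        "tokens": count,
    }


def fake_stream() -> Iterator[str]:
    """Hypothetical stand-in for a streaming model response."""
    time.sleep(0.05)  # simulated wait before the first token
    for word in ["Model", "selection", "is", "a", "trade-off"]:
        yield word
        time.sleep(0.01)  # simulated per-token generation delay


print(measure_stream(fake_stream()))
```

Passing the clock as a parameter keeps the measurement logic testable without real delays.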

Geographic distribution plays a big role in application performance. Model invocation latency can vary depending on whether calls originate from different regions, local machines or different cloud providers. Integration patterns affect how users see application performance.

Privacy And Compliance Requirements

Organizations operating in regulated industries like healthcare, finance or government must ensure their models comply with standards like General Data Protection Regulation (GDPR), Health Insurance Portability and Accountability Act (HIPAA), or California Consumer Privacy Act (CCPA). You must choose models that provide robust data protection, secure deployment options and transparency in decision-making processes.

Privacy risks should be assessed and addressed throughout the development lifecycle of an AI system. Organizations should limit the collection of training data to what can be collected lawfully. Data from certain domains should be subject to extra protection and used only in narrowly defined contexts. Security best practices help avoid data leakage and reduce risk.

Types Of AI Models Available For Product Teams

Understanding which types of models exist helps you make better AI model selection decisions and design AI software development for smarter, faster digital products. The model landscape breaks down into several core categories, each with distinct characteristics and trade-offs.

Proprietary Models Vs Open Source Models

Proprietary models like GPT-4o, Claude 3.5, and Gemini are owned and operated by companies. You access them via API with usage costs and closed-source code. Think of it as renting a high-performance tool with a locked hood. These models offer enterprise features that include SOC2 compliance, SLAs, and dedicated support teams.

Open source models make their code, weights, or architecture available to the public. Anyone can use, modify, or fine-tune these models, often for free or under flexible licenses. Examples include Meta's Llama 3 series and Mistral's models. Llama 3.1 70B Instruct has 70 billion parameters and is optimized for instruction-following tasks.

Text-Only Models Vs Multimodal Models

Text-only models process and generate text alone. Early versions of GPT, BERT, and Claude operated this way. These models face a fundamental constraint: they must rely on human-generated descriptions to comprehend visual, auditory, or spatial concepts.

Multimodal models process text, images, audio, video, and sensor data at once. GPT-4 with Vision can describe images and interpret graphs. Gemini 1.5 Pro and Claude 3 Opus handle multiple data types. Stable Diffusion generates realistic-looking, high-definition images from text prompts.

Multimodal systems develop spatial reasoning capabilities and contextual awareness that neither modality could provide on its own. Enterprise data is multimodal by nature. Business workflows involve PDFs, dashboards, voice calls, and images. A developer debugging a UI issue cannot communicate visual layout problems through text descriptions alone. The model needs to see the actual interface.

Large Foundation Models Vs Small Specialized Models

Foundation models are large deep learning neural networks trained on a broad spectrum of data, capable of performing a wide variety of general tasks. GPT-4 is widely reported, though not officially confirmed, to contain about 1.76 trillion parameters. These models require substantial resources for training and deployment.

Small language models (SLMs) have fewer parameters and are fine-tuned on a subset of data for particular use cases. Open source SLMs such as Mistral 7B contain about 7.3 billion parameters. SLMs require fewer computational resources for training and deployment, which reduces infrastructure costs and enables faster fine-tuning.

SLMs excel in particular domains but struggle compared to LLMs when it comes to general knowledge. The lightweight nature of SLMs makes them ideal for edge devices and mobile applications. They can often run with just the resources available on a single mobile device without needing constant connection to larger resources.

Base Models Vs Fine-Tuned Models

Base models are pre-trained on massive datasets and provide general purpose capabilities such as language understanding, code generation, or vision recognition. They are the foundations for more specialized models. GPT-4, LLaMA, and Stable Diffusion are examples.

Fine-tuned models start from a base model but are trained further on domain data to improve accuracy and relevance for enterprise workflows. Fine-tuning can increase accuracy substantially. Phi-2's accuracy on financial data sentiment analysis increased from 34% to 85%. ChatGPT's accuracy on Reddit comment sentiment analysis improved by 25 percentage points using just 100 examples.

Base models offer rapid deployment and generic solutions. Fine-tuned models deliver domain accuracy and better alignment with enterprise processes. Fine-tuning can also teach a model to structure its output in formats like JSON, YAML, or Markdown.

Essential Model Evaluation Metrics And Benchmarks

Assessing models requires more than gut feeling or vendor marketing claims. You need concrete metrics that reveal how different models perform under real-world conditions, just as case studies of AI features that increased engagement by 34% rely on measurable outcomes. The right assessment framework helps you select the appropriate model based on data rather than assumptions.

Accuracy And Performance Metrics

Accuracy measures the ratio of correct predictions to total predictions. Precision and recall provide deeper understanding for classification tasks. Precision calculates how many positive predictions were correct. Recall measures how many actual positives the model identified.

The F1 score combines precision and recall using the harmonic mean. This metric punishes extreme values, so a model must maintain decent precision and recall to achieve a high F1. Accuracy alone can be misleading for imbalanced datasets where one class appears rarely. A model predicting negative 100% of the time would score 99% accuracy if positives occur only 1% of the time.
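The imbalanced-data pitfall is easy to reproduce. Here is a small sketch that computes the four metrics from scratch for binary labels; `classification_metrics` is a hypothetical helper, and the data is synthetic with 1 positive in 100 samples.

```python
def classification_metrics(y_true: list[int], y_pred: list[int]) -> dict:
    """Compute accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # Harmonic mean of precision and recall; defined as 0 when both are 0.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Synthetic imbalanced dataset: 1 positive among 100 samples.
y_true = [1] + [0] * 99
always_negative = [0] * 100  # a "model" that never predicts the positive class

print(classification_metrics(y_true, always_negative))
```

The always-negative model reaches 99% accuracy while its recall and F1 are both zero, which is why accuracy should not be the only metric on rare-event tasks.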

Cost Per Request And Token Pricing

Token pricing affects operational costs at scale. GPT-5.4 charges USD 0.25 per million cached input tokens and USD 15.00 per million output tokens. GPT-5 mini offers a more economical option at USD 0.03 per million cached input tokens and USD 2.00 per million output tokens.

Output tokens cost substantially more than input tokens. The median output-to-input price ratio sits around 4x. This makes lengthy completions expensive even when inputs are small. For a support ticket workload averaging 3,150 input tokens and 400 output tokens per ticket, GPT-4o Mini handles 10,000 tickets for under USD 10.00. GPT-5.2 Pro costs over 100x more for a similar workload.
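Workload cost follows directly from token counts and per-million-token prices. The sketch below reuses the ticket workload from the paragraph above. The two prices are illustrative placeholders, not quoted rates, so check your provider's current price list before relying on the result.

```python
def workload_cost_usd(
    requests: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate total cost in USD from token counts and per-million-token prices."""
    input_millions = requests * input_tokens_per_request / 1_000_000
    output_millions = requests * output_tokens_per_request / 1_000_000
    return input_millions * input_price_per_million + output_millions * output_price_per_million


# Placeholder prices (USD per million tokens), assumed for illustration only.
cost = workload_cost_usd(
    requests=10_000,
    input_tokens_per_request=3_150,
    output_tokens_per_request=400,
    input_price_per_million=0.15,
    output_price_per_million=0.60,
)
print(f"Estimated cost for 10,000 tickets: USD {cost:.2f}")
```

Running the same function with each candidate's prices gives a like-for-like cost comparison before any traffic is sent.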

Response Time And Throughput Considerations

Time to first token (TTFT) measures how quickly your application starts responding. For Claude 3.5 Haiku, optimized versions achieved a 42.20% reduction in TTFT at the median and a 51.70% reduction at the 90th percentile. Output tokens per second (OTPS) indicates generation speed after the first response. The same optimization delivered a 77.34% improvement at the median and 125.50% at the 90th percentile.

Latency depends on model type, prompt token count, generated token count, and system load. Reducing the max_tokens parameter decreases latency per request. Streaming returns tokens as they become available instead of waiting for full completion.

Benchmark Scores You Should Care About

AI benchmarks provide standardized tests to compare model performance. Benchmark quality varies substantially though. MMLU scored lowest on usability at 5.0 among 24 assessed benchmarks, while GPQA scored much higher at 10.9. Most benchmarks achieve their highest scores at the design stage and their lowest scores at the implementation stage.

Benchmarks measure narrow tasks rather than real-world scenarios. A model scoring perfectly on benchmarks might struggle with messy user inputs or domain-specific edge cases. Data contamination occurs when test data leaks into training sets and lets models memorize answers instead of solving tasks. Benchmark saturation happens when models hit near-perfect scores, which makes comparisons meaningless.

AI Model Selection Process For Startups

Successful AI model selection follows a structured approach rather than random experimentation. Many AI projects fail because they start with technology instead of business needs. Organizations that define requirements upfront are more likely to achieve a positive return on investment within the first year.

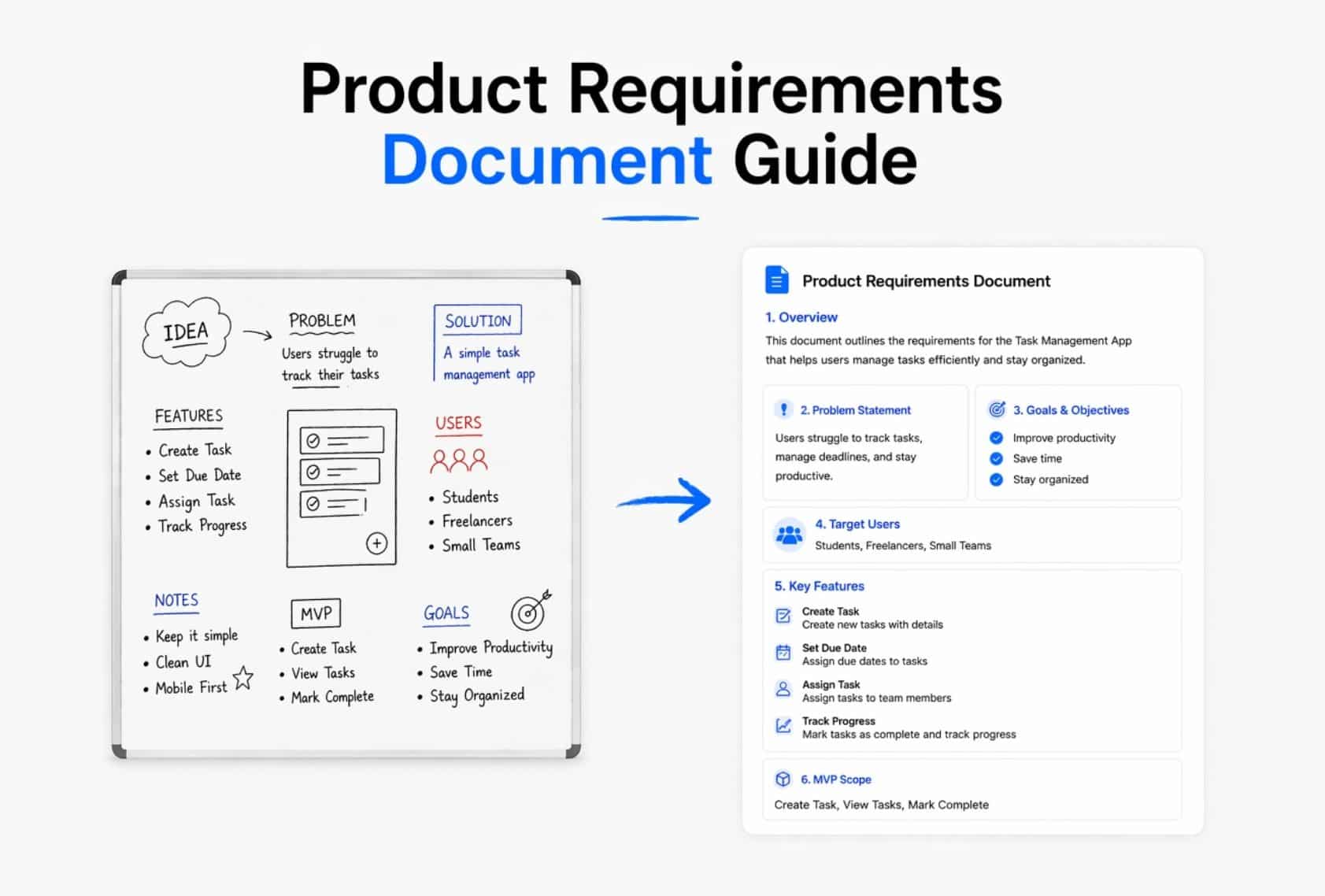

Step 1: Define Your Problem And Success Criteria

Identify specific business problems you need to solve first. What inefficiencies or bottlenecks exist in current practices? Your problem statement should state why machine learning is the right tool and where tech consulting services for modern businesses could accelerate outcomes.

The SMART framework helps you set clear goals. Goals should be specific, measurable, achievable, relevant, and time-bound. Define business metrics like revenue or click-through rate and model metrics like precision or recall. Business metrics matter most since they reflect actual business performance.

Step 2: Identify Candidate Models

Map your problem to specific AI task categories like regression, classification, clustering, or generation. Data governs possible solutions. You need to assess whether you have structured data suited for traditional algorithms or unstructured data that requires specialized models.

Think about constraints upfront. Create a skills matrix for your team and cross-reference it with skills needed to operate each candidate model. Filter models based on cloud compatibility, budget limits, licensing requirements, and core feature needs.
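Hard constraints such as budget, licensing, cloud compatibility, and required features can be applied as a simple filter before any testing starts. This is only a sketch: the `Candidate` fields, model names, prices, and licenses below are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    monthly_cost_usd: float
    license: str
    clouds: frozenset
    features: frozenset


def shortlist(candidates, max_monthly_cost_usd, allowed_licenses, cloud, required_features):
    """Keep only candidates that satisfy every hard constraint."""
    required = frozenset(required_features)
    return [
        c for c in candidates
        if c.monthly_cost_usd <= max_monthly_cost_usd
        and c.license in allowed_licenses
        and cloud in c.clouds
        and required <= c.features  # every required feature must be present
    ]


# Hypothetical catalog: names, prices, and licenses are invented for this example.
catalog = [
    Candidate("hosted-large", 900.0, "proprietary", frozenset({"aws", "azure"}), frozenset({"json_output", "vision"})),
    Candidate("open-medium", 380.0, "apache-2.0", frozenset({"aws"}), frozenset({"json_output"})),
    Candidate("open-small", 36.0, "apache-2.0", frozenset({"aws", "gcp"}), frozenset()),
]

picked = shortlist(
    catalog,
    max_monthly_cost_usd=500.0,
    allowed_licenses={"apache-2.0", "mit"},
    cloud="aws",
    required_features={"json_output"},
)
print([c.name for c in picked])
```

Filtering first keeps the later, more expensive evaluation steps focused on models the team could actually adopt.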

Step 3: Run Small-Scale Tests

Test model output against completed projects first. This helps you learn how to prompt models. Run proof of concept tests lasting 2 to 4 weeks to confirm technical feasibility. Expand to limited pilots with small user groups over 4 to 8 weeks.

Use representative data that resembles production inputs when testing. Include diverse examples that cover common scenarios and edge cases.

Step 4: Evaluate Against Your Metrics

Compare candidate models using predefined evaluation criteria. Track actual costs through token usage and measure end-to-end response times under realistic conditions. Run each evaluation example multiple times since model outputs vary. Worst-case performance indicates reliability floors, so pay attention to it.
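Because outputs vary between runs, it helps to score each example several times and keep the worst result alongside the mean. In the sketch below, `noisy_scorer` is a hypothetical stand-in for "call the model, then grade the output on a 0 to 1 scale".

```python
import random
import statistics
from typing import Callable


def repeated_scores(score_once: Callable[[str], float], example: str, runs: int = 5) -> dict:
    """Score the same example several times and summarize the spread."""
    scores = [score_once(example) for _ in range(runs)]
    return {
        "mean": statistics.mean(scores),
        "worst": min(scores),  # the reliability floor for this example
        "best": max(scores),
    }


def noisy_scorer(example: str) -> float:
    """Hypothetical stand-in for calling a model and grading its output (0.0 to 1.0)."""
    return random.choice([0.6, 0.8, 0.9, 1.0])


print(repeated_scores(noisy_scorer, "Summarize this support ticket", runs=5))
```

Comparing candidates on the worst score as well as the mean highlights models that are usually good but occasionally fail badly.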

Step 5: Make The Final Decision

A scoring matrix transforms subjective priorities into quantifiable scores. Weight technical performance at 40 points, business value at 25 points, implementation feasibility at 20 points, and risk assessment at 15 points. The right model balances quality, cost, and speed while meeting your specific requirements.
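The weights above translate into a short calculation. This sketch assumes each criterion is rated from 0.0 to 1.0, with a higher risk rating meaning lower risk. The two candidates and their ratings are hypothetical.

```python
# Point weights from the scoring matrix described above (they sum to 100).
WEIGHTS = {
    "technical_performance": 40,
    "business_value": 25,
    "implementation_feasibility": 20,
    "risk_assessment": 15,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Turn per-criterion ratings (0.0 to 1.0) into a score out of 100 points."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Hypothetical ratings for two candidates; a higher risk rating means lower risk.
candidates = {
    "model_a": {"technical_performance": 0.9, "business_value": 0.6,
                "implementation_feasibility": 0.5, "risk_assessment": 0.7},
    "model_b": {"technical_performance": 0.7, "business_value": 0.8,
                "implementation_feasibility": 0.9, "risk_assessment": 0.8},
}

ranked = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranked:
    print(f"{name}: {weighted_score(candidates[name]):.1f} / 100")
```

Agreeing on the ratings scale before scoring keeps the matrix from simply encoding whichever model the team already preferred.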

How To Build Your AI Tech Stack And Deployment Strategy

Your tech stack determines how well your selected model operates in production. The right combination of frameworks, hosting infrastructure and support systems turns model selection into business value and helps you build a future-proof tech stack for scalable growth.

Choose The Right AI Framework

LangChain is a common starting point for startups building applications with large language models. It offers rapid prototyping with 300+ integrations. LlamaIndex specializes in retrieval augmented generation and handles document preprocessing and index optimization automatically. If you need custom models that require flexibility, PyTorch provides strong debugging capabilities without a steep learning curve. TensorFlow excels at production deployment and scaling in distributed systems. FastAPI wraps models in production-ready APIs with minimal code and generates documentation automatically, and an API-first architecture for scalable systems ensures these components integrate cleanly.

Model Hosting Options For Different Budgets

CPU instances on AWS cost around USD 36.00 per month, while GPU instances run about USD 380.00 monthly. Storage expenses remain negligible at USD 0.02 per GB monthly. Managed cloud services handle infrastructure configuration, scaling, and maintenance so teams can focus on AI development. Serverless options charge only for what you use. This makes experimentation feasible even when budgets are tight. Your hosting choice should also align with your broader startup tech stack choices for high growth teams.

Vector Databases And RAG Implementation

Vector databases store embeddings close to source data and simplify data processing pipelines. Aurora PostgreSQL with the pgvector extension suits teams invested in relational databases. Amazon Titan Text Embedding v2 produces vectors with 1,024 dimensions by default. Domain-specific datasets often result in hundreds of millions of embeddings that require fast similarity search, so your vector store must fit into your overall tech stack selection strategy for 2026.
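At its core, similarity search ranks stored vectors by how close they are to a query vector. The sketch below does this by brute force with cosine similarity in plain Python. It is not how pgvector or any production vector database is implemented, since those rely on indexes to avoid scanning every vector, and the tiny 3-dimensional vectors and document IDs are made up for illustration.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Brute-force search: score every stored vector and keep the k most similar."""
    scored = [(doc_id, cosine_similarity(query, vector)) for doc_id, vector in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Toy embeddings; real embeddings have hundreds or thousands of dimensions.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.0],
    "api-reference": [0.0, 0.1, 0.9],
}

for doc_id, score in top_k([0.8, 0.2, 0.0], index, k=2):
    print(f"{doc_id}: {score:.3f}")
```

Brute force is fine for a prototype with a few thousand documents. At hundreds of millions of embeddings, an indexed vector store becomes necessary.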

Monitoring And Iteration After Deployment

Post-deployment monitoring tracks accuracy and reliability continuously. Model drift occurs when performance degrades due to changing data patterns. Automated monitoring tools detect anomalies early and flag areas that need retraining. Organizations that implement reliable monitoring policies report better performance in revenue growth and cost savings.
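One simple way to catch drift is to compare rolling accuracy against the accuracy measured at launch. The sketch below assumes you can label at least a sample of production predictions as correct or incorrect. `DriftMonitor`, the window size, and the tolerance are hypothetical choices, not recommended values.

```python
from collections import deque


class DriftMonitor:
    """Flag drift when rolling accuracy falls below a baseline by more than a tolerance."""

    def __init__(self, baseline_accuracy: float, window: int = 100, tolerance: float = 0.05):
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        self.window = window
        self.outcomes = deque(maxlen=window)  # keeps only the most recent results

    def record(self, correct: bool) -> None:
        """Store whether the latest labeled prediction was correct."""
        self.outcomes.append(1 if correct else 0)

    def rolling_accuracy(self) -> float:
        if not self.outcomes:
            raise ValueError("no outcomes recorded yet")
        return sum(self.outcomes) / len(self.outcomes)

    def drifted(self) -> bool:
        # Wait for a full window so a few early errors do not trigger an alert.
        if len(self.outcomes) < self.window:
            return False
        return self.rolling_accuracy() < self.baseline_accuracy - self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.90, window=10, tolerance=0.05)
for correct in [True] * 10 + [False] * 3:
    monitor.record(correct)
print(monitor.rolling_accuracy(), monitor.drifted())
```

A drift flag is a prompt to investigate and possibly retrain, not proof that the model itself has changed.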

How GainHQ Supports AI Model Selection For SaaS Product Teams

AI model selection requires a structured approach. Product teams must compare available models and evaluate model capabilities, model performance, and operational costs. GainHQ helps teams organize this process during AI development. Teams can document candidate models, track model evaluation results, and review model characteristics in one place. This helps teams choose the right AI model for specific use cases and business objectives.

GainHQ also helps teams analyze model choice across multiple models. Product and engineering teams can review trade-offs such as cost, latency, context window, and model complexity. This makes it easier to compare open source models, large language models, and other pretrained models while ensuring your UI/UX design for SaaS products continues to surface AI capabilities in a way users can trust and adopt.

Clear documentation supports better model selection decisions. Teams can track model updates, evaluate model behavior in real-world applications, and ensure the selected model meets quality standards and performance expectations.

FAQs

How Do You Choose The Right AI Model For A Specific Business Use Case?

Start with a clear understanding of your tasks, data, and business objectives. Evaluate candidate models through model evaluation tests that measure accuracy, cost, and performance. Compare different models to determine the most appropriate model for the specific purpose.

Can Simpler Models Deliver Better Results Than Large Language Models?

Yes. Simpler models often deliver strong efficiency and lower operational costs for focused tasks. For example, sentiment analysis or structured data prediction may perform well with smaller machine learning models instead of complex large language models.

Do Open Source Models Work For Real World AI Applications?

Yes. Many open source models provide strong model capabilities and flexibility. Teams can fine-tune pretrained models to match specific use cases. Open source options also reduce cost constraints and allow deeper control over model behavior and deployment.

Is It Possible To Use Multiple Models In One AI System?

Yes. Many AI systems combine multiple models through hybrid architectures. A single model may handle text generation, while another model processes visual data or retrieval augmented generation. This multi-model approach improves responses for complex tasks and underpins many AI-driven automation strategies in SaaS.

Does Training Data Quality Affect AI Model Selection Decisions?

Yes. Training data quality directly affects model performance, accuracy, and reliability. Poor data leads to weak model evaluation results and unreliable decision-making. High-quality data helps teams develop AI systems that deliver superior results in real-world applications.