The period from 2024 to 2026 marks a pivotal reset for SaaS companies as artificial intelligence transitions from optional add-ons to baseline capability for B2B software buyers. Market signals underscore this shift clearly. AI-native SaaS firms are achieving faster IPO trajectories, with early 2026 listings showing 25-40% higher valuations compared to traditional peers. Consolidation pressure has intensified on legacy tools without workflow intelligence, with 15-20% market share erosion reported in 2025.

Two-thirds of SaaS CEOs believe embedding AI into their product is essential to future competitiveness, yet many companies report only limited AI adoption. This gap exists because AI readiness is about practical ability to deploy and scale AI in production, not generic experimentation. Strong data foundations are now the main differentiator for success, more than model selection alone. This guide offers pragmatic strategies for SaaS founders, product leaders, and CTOs planning serious AI initiatives in 2026.

What Is AI Readiness For SaaS Products

AI readiness is the operational state where a SaaS product can reliably design, ship, and manage AI capabilities integrated into core workflows. This readiness spans multiple layers: strategic alignment with business goals, resilient data foundations for feature engineering, modular architectures separating transactional from analytical loads, security frameworks compliant with GDPR and emerging regulations, team competencies in MLOps and prompt engineering, and organizational change management to foster AI fluency.

AI readiness does not come from simply integrating a model. It requires building architecture, data flows, product strategy, and user experience that support intelligent systems without introducing fragility. Successful AI integration in SaaS involves treating AI not as a standalone feature but as an operating capability that enhances workflows and improves outcomes. Consider how HubSpot evolved from simple email suggestions to an AI-driven pipeline coach using multi-year deal history data spanning 10 million interactions. Zendesk similarly shifted to AI-driven ticket routing and resolution forecasting, achieving 40% faster resolution times. These examples show how readiness transforms static tools into intelligent systems delivering sustained value.

How To Build Strong Data Foundations For AI-Ready SaaS

Data strategy, quality, and infrastructure serve as the critical enablers for AI in SaaS products. A strong data foundation is crucial for successful AI implementation, as models inherit flaws from their inputs. Organizations with robust data setups achieve 3x higher AI project ROI according to Gartner’s 2025 survey. Yet 63% of organizations lack such practices, and 60% of AI projects without that foundation are predicted to be abandoned through 2026.

Unifying Operational Data Into A Single Source Of Truth

Typical SaaS environments suffer from data silos across microservices, third-party integrations like Stripe for billing or Intercom for support, and disparate databases. PostgreSQL handles transactions while MongoDB stores logs, blocking unified customer views essential for AI initiatives.

Remediation involves a central lakehouse using Snowflake or Databricks that consolidates product events, billing, support, and CRM data with consistent entity IDs. Practices include adopting event tracking standards via RudderStack, schema governance through dbt, and canonical models resolving one-to-many relationships. AI thrives on high-quality, structured data rather than solely large volumes of unstructured data.
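
As a rough illustration of what "consistent entity IDs" can mean in practice, the sketch below merges records from two systems under one canonical customer ID. The field names, the `canonical_id` rule (a hash of the normalized email), and the sample records are assumptions made for this example, not a prescribed schema.

```python
import hashlib
from collections import defaultdict


def canonical_id(email: str) -> str:
    """Derive a stable customer ID from a normalized email (illustrative rule)."""
    normalized = email.strip().lower()
    return "cust_" + hashlib.sha256(normalized.encode("utf-8")).hexdigest()[:12]


def unify(sources: dict[str, list[dict]]) -> dict[str, dict]:
    """Merge per-system records into one view per canonical customer ID."""
    unified: dict[str, dict] = defaultdict(dict)
    for system, records in sources.items():
        for record in records:
            cid = canonical_id(record["email"])
            # Prefix each field with its source system so values never collide.
            for key, value in record.items():
                if key != "email":
                    unified[cid][f"{system}.{key}"] = value
    return dict(unified)


# Hypothetical exports from a billing tool and a support tool.
sources = {
    "billing": [{"email": "Ana@Example.com", "plan": "pro", "mrr": 99}],
    "support": [{"email": " ana@example.com", "open_tickets": 2}],
}
customers = unify(sources)
```

Both records resolve to the same customer even though the emails differ in case and whitespace. In a real lakehouse this logic would usually live in the transformation layer rather than in application code.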

Designing Robust Data Quality And Governance Practices

Poor data quality erodes model accuracy by 25-50% in SaaS contexts. Data maturity involves evaluating data quality, accessibility, governance, and lineage to ensure effective AI operations.

Practical controls include data contracts via Great Expectations for validation, lineage tracking with Apache Atlas, and anomaly detection on metrics like DAU or churn signals. Access controls employ role-based permissions in tools like Immuta, field-level masking for PII, and data residency in AWS regions compliant with regulatory requirements. Data governance is essential for compliance with regulations like GDPR or HIPAA, which helps avoid costly setbacks. Most teams report that 85% of enterprise RFPs in 2026 mandate such controls.
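
The idea behind a data contract with field-level masking can be sketched in plain Python. This does not use the Great Expectations or Immuta APIs; the `FieldRule` type, the contract fields, and the `***` placeholder are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class FieldRule:
    name: str
    check: Callable[[Any], bool]
    pii: bool = False


# Hypothetical contract for a product-usage row.
CONTRACT = [
    FieldRule("tenant_id", lambda v: isinstance(v, str) and v != ""),
    FieldRule("email", lambda v: isinstance(v, str) and "@" in v, pii=True),
    FieldRule("daily_active_users", lambda v: isinstance(v, int) and v >= 0),
]


def validate(row: dict) -> list[str]:
    """Return the names of fields that are missing or violate their rule."""
    return [r.name for r in CONTRACT if r.name not in row or not r.check(row[r.name])]


def mask_pii(row: dict) -> dict:
    """Replace PII fields with a fixed placeholder before analytical use."""
    pii_fields = {r.name for r in CONTRACT if r.pii}
    return {k: ("***" if k in pii_fields else v) for k, v in row.items()}


good = {"tenant_id": "t1", "email": "ana@example.com", "daily_active_users": 42}
bad = {"tenant_id": "", "daily_active_users": -1}
```

Here `validate(good)` returns an empty list, while `validate(bad)` names all three fields. Rows that fail the contract can be quarantined before they reach training or feature pipelines.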

Implementing Modern Data Infrastructure For AI Workloads

The shift from monolithic databases to modern stacks supports scalable feature computation and low-latency retrieval. AI-ready SaaS platforms standardize on technologies combining event streams via Kafka, columnar warehouses like BigQuery, and S3-compatible storage.

Patterns include event-driven ingestion, CDC pipelines via Debezium streaming product events to feature stores like Tecton, and transformation layers producing training datasets. This approach cuts ETL costs by 70% in mid-sized SaaS while enabling near real-time features for AI workloads.

Establishing Feature Stores And Reusable Data Products

A feature store in practical SaaS terms is a managed catalog of AI-ready variables used across multiple models and teams. Benefits include consistent feature definitions, offline and online parity for sub-100ms serving, and reduced duplication of data transformation work when built on robust AI infrastructure for intelligent applications.

Prioritize initial feature sets tied to specific use cases like lead scoring using RFM features, anomaly detection, or content personalization. Manage versioning, documentation, and access controls for features through tools like Feast and DataHub to avoid silent breaking changes.
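
For readers unfamiliar with RFM (recency, frequency, monetary) features, here is a minimal sketch of how they might be computed for one account. The input shape (a list of `(date, amount)` pairs) and the output key names are assumptions for this example. A feature store such as Feast would register and serve values like these rather than compute them inline.

```python
from datetime import date


def rfm_features(events: list[tuple[date, float]], as_of: date) -> dict[str, float]:
    """Compute recency (days), frequency (count), and monetary (sum) for one account."""
    if not events:
        return {"recency_days": float("inf"), "frequency": 0, "monetary": 0.0}
    last_seen = max(day for day, _ in events)
    return {
        "recency_days": (as_of - last_seen).days,
        "frequency": len(events),
        "monetary": sum(amount for _, amount in events),
    }


# Hypothetical purchase history for a single account.
history = [(date(2026, 1, 5), 100.0), (date(2026, 1, 20), 50.0)]
features = rfm_features(history, as_of=date(2026, 1, 30))
```

Using one definition like this for both training and serving is what keeps offline and online features in parity.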

Ensuring Tenant Isolation, Privacy, And Responsible Use

Multi-tenant SaaS faces unique challenges where training and inference must benefit from aggregate customer data without leaking tenant specifics. Patterns include per-tenant keys in Pinecone vector databases, pseudonymization through hashing user IDs, and differential privacy techniques with epsilon values around 1.0.
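
Two of these patterns can be sketched with the standard library alone. The example uses a keyed hash (HMAC) rather than a bare hash for pseudonymization, which is a deliberate substitution: an unkeyed hash of a guessable ID can be reversed by brute force. It also shows the Laplace mechanism for a simple count with epsilon set to 1.0. The function names and the per-tenant key handling are illustrative assumptions.

```python
import hashlib
import hmac
import random


def pseudonymize(user_id: str, tenant_key: bytes) -> str:
    """Keyed hash so the same user maps to a different token in each tenant."""
    return hmac.new(tenant_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query (sensitivity 1, scale 1/epsilon)."""
    scale = 1.0 / epsilon
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


rng = random.Random(7)
token_a = pseudonymize("user-123", b"tenant-a-secret")
token_b = pseudonymize("user-123", b"tenant-b-secret")
noisy = private_count(100, epsilon=1.0, rng=rng)
```

A smaller epsilon adds more noise and gives stronger privacy. The right value depends on how many queries are answered and on the sensitivity of the data, so 1.0 is only a starting point.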

Organizations should create guidelines for responsible AI usage, including bias mitigation strategies and intellectual property policies. Practical guardrails for generative AI features include toxicity filters via Perspective API, content policies, and clear user messaging on data usage. This approach supports trusted customer relationships and directly influences enterprise adoption in 2026.

Creating Feedback Loops And AI Performance Monitoring

AI readiness requires continuous evaluation of model outputs, not one-time offline metrics before launch. Mechanisms include user feedback buttons, outcome tracking via Optimizely linking AI outputs to CSAT scores, and A/B testing connecting AI behavior to business results.

Set up monitoring for drift using Evidently AI with a KL-divergence threshold of 0.1. When customer success teams flag unhelpful suggestions or user rejection rates reach 5%, trigger prompt tuning cycles. To effectively integrate AI, SaaS companies must ensure their data architecture, workflows, and operating processes are designed to support continuous learning and adaptability.
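
The drift and rejection triggers can be illustrated in plain Python. This sketch does not use the Evidently API. It computes KL divergence between a current and a baseline histogram and combines it with a rejection-rate check. The direction of the divergence (current relative to baseline), the smoothing constant, and the function names are assumptions for this example.

```python
import math


def kl_divergence(p: list[float], q: list[float], eps: float = 1e-9) -> float:
    """KL(p || q) over matching histogram bins, smoothed to avoid log(0)."""
    if len(p) != len(q):
        raise ValueError("histograms must have the same number of bins")
    p_s = [x + eps for x in p]
    q_s = [x + eps for x in q]
    p_total, q_total = sum(p_s), sum(q_s)
    return sum(
        (a / p_total) * math.log((a / p_total) / (b / q_total))
        for a, b in zip(p_s, q_s)
    )


def needs_tuning(baseline: list[float], current: list[float], rejected: int,
                 shown: int, kl_threshold: float = 0.1,
                 rejection_threshold: float = 0.05) -> bool:
    """Flag a tuning cycle when drift or user rejection crosses its threshold."""
    drift = kl_divergence(current, baseline)
    rejection_rate = rejected / shown if shown else 0.0
    return drift > kl_threshold or rejection_rate >= rejection_threshold


baseline = [0.25, 0.25, 0.25, 0.25]
shifted = [0.7, 0.1, 0.1, 0.1]
```

With these inputs, `needs_tuning(baseline, shifted, 0, 100)` is true because of drift alone, and `needs_tuning(baseline, baseline, 6, 100)` is true because of rejections alone.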

Strategic Alignment Of AI Roadmap With SaaS Business Goals

Many SaaS AI projects fail because they chase novelty instead of reinforcing core business metrics. AI is increasingly viewed as operating leverage that improves outcomes, reduces friction, strengthens retention, and increases scalability and defensibility in SaaS operations, especially when teams follow an AI-driven automation roadmap for SaaS business leaders.

Identifying High-Value Use Cases In Existing Workflows

Map current customer journeys using session replays via PostHog to pinpoint friction points AI can realistically improve. High-impact AI use cases often include automating repetitive tasks, such as customer support and user onboarding. AI-assisted data entry, intelligent routing of tickets, and predictive alerts for account health represent strong starting points.

Estimate impact using historical data with baseline metrics for time saved and conversion improvements. Quick win use cases that prove value in 90 days include AI routing with 25% efficiency gains.

Balancing Product Differentiation And Operational Efficiency

The trade-off between using AI for product differentiation versus internal efficiency shapes product strategy. An AI-driven planning module might command premium pricing at 20% higher ACV, while back-office automation boosts margins 5-10%.

AI can drive a higher run rate per employee, aiming for an increase in operating margin of 20 percentage points through more efficient growth rather than cuts. Sequence investments using the Rule of 40, under which revenue growth rate plus profit margin should exceed 40%.
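
A short worked example of Rule of 40 sequencing follows. The two candidate investments and their projected growth and margin figures are hypothetical numbers chosen only to show the arithmetic.

```python
def rule_of_40_score(revenue_growth_pct: float, profit_margin_pct: float) -> float:
    """Rule of 40 score: revenue growth rate plus profit margin, both in percent."""
    return revenue_growth_pct + profit_margin_pct


def rank_investments(options: list[dict]) -> list[dict]:
    """Sort candidate investments by projected Rule of 40 score, highest first."""
    return sorted(
        options,
        key=lambda o: rule_of_40_score(o["growth_pct"], o["margin_pct"]),
        reverse=True,
    )


# Hypothetical company-level projections under each investment.
options = [
    {"name": "Back-office automation", "growth_pct": 25.0, "margin_pct": 12.0},
    {"name": "AI planning module", "growth_pct": 35.0, "margin_pct": 8.0},
]
ranked = rank_investments(options)
```

In this illustration the planning module projects a score of 43 and clears the bar, while the automation project projects 37 and does not.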

Setting Realistic AI Adoption Milestones And KPIs

Define specific milestones for 2026: 50% of core workflows AI-enhanced and 30% of MAU actively using AI features. Appropriate KPIs include time to first value under 14 days, opt-in rates at 40%, and NPS uplift of 15 points.

Companies that effectively integrate AI into their workflows can expect to see improvements in Gross Revenue Retention and Net Revenue Retention, which signal revenue durability and operational efficiency. Conduct quarterly reviews tracking AI contribution to upsell and renewal conversations.

Aligning Pricing And Packaging With AI Capabilities

Common pricing models include usage-based charges at $0.01 per query beyond an included threshold, AI add-on bundles at $20 per user monthly, or AI-first premium tiers with 30% higher ACV. Strong data foundations enable accurate cost modeling for inference at $0.001 per 1k tokens.
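
The unit economics behind usage-based pricing can be checked with a few lines of arithmetic. The sketch below uses the per-query price and per-token cost quoted above. The included-query threshold, query volume, and tokens per query are hypothetical inputs.

```python
def usage_charge(queries: int, included: int, price_per_query: float = 0.01) -> float:
    """Bill only the queries above the included threshold."""
    return max(0, queries - included) * price_per_query


def inference_cost(queries: int, tokens_per_query: int,
                   cost_per_1k_tokens: float = 0.001) -> float:
    """Estimate model spend from token volume."""
    return queries * tokens_per_query / 1000 * cost_per_1k_tokens


def gross_margin(revenue: float, cost: float) -> float:
    """Gross margin as a fraction of revenue (0.0 when there is no revenue)."""
    return (revenue - cost) / revenue if revenue else 0.0


# Hypothetical tenant: 12,000 queries in a month, 10,000 included, 1,500 tokens each.
revenue = usage_charge(queries=12_000, included=10_000)
cost = inference_cost(queries=12_000, tokens_per_query=1_500)
margin = gross_margin(revenue, cost)
```

In this scenario overage revenue is about $20 against roughly $18 of inference cost. Included queries still consume tokens, so the threshold matters as much as the per-query price.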

A comprehensive AI readiness assessment should include architecture reviews for modularity and scalability, product strategy alignment with market positioning, and UX complexity analysis that prioritizes UI/UX design services for SaaS products as a core part of AI feature adoption.

Managing Risk, Compliance, And Brand Trust Around AI

Strategy must incorporate risk evaluation for AI capabilities, especially in regulated verticals with sensitive content. Internal review processes, model cards reporting measured bias under 5%, and approval workflows protect brand trust.

Consent flows for using customer data in model training and disclaimers around AI-generated recommendations prevent escalations. Customer trust depends on proactive communication and controls, reinforced by a structured AI governance framework for SaaS platforms.

Orchestrating Cross-Functional Ownership Of AI Initiatives

AI readiness shifts from individual champions to cross-functional squads with product, engineering, data, design, and go-to-market roles. A clear owner, often a product lead, maintains accountability for AI outcomes.

Decision boundaries between central platform teams handling infrastructure and domain teams customizing shared AI capabilities prevent conflicts. Executive sponsorship resolves trade-offs between near-term roadmap pressures and foundational AI investments through monthly AI council reviews.

Architectural Patterns For AI-Ready SaaS Platforms

AI readiness reshapes SaaS architecture choices from API design to model deployment strategies. Most SaaS platforms struggle not due to a lack of features but because their foundational architecture cannot support growth, underscoring the value of following best practices of SaaS architecture.

Separating Transactional Workloads From Analytical And AI Layers

Mixing OLTP and heavy analytical or model workloads in the same database creates performance issues and a 50% degradation risk. Standard patterns maintain a fast transactional core with replicated streams via Kafka feeding warehouses and AI systems.

Read-optimized stores and caches serve AI-enhanced experiences without overloading primary databases. Scalability is important to determine whether systems can handle the computational demands of AI without major changes, aligning with principles of scalable software architecture for high-growth products.

Adopting Event-Driven Architecture For Rich Behavioral Data

Event streams capture user actions and system events in formats ideal for AI feature creation. Publish well-structured events maintaining schemas via Confluent Schema Registry, enabling replays when models or business logic change as part of broader SaaS scalability strategies for sustainable growth.
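
To show why replayable, versioned events matter, the sketch below recomputes a feature from an event log with a pure reducer function. The `Event` fields and the `count_logins` reducer are hypothetical. A production system would read events from a stream or warehouse rather than an in-memory list.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, TypeVar

S = TypeVar("S")


@dataclass(frozen=True)
class Event:
    name: str
    schema_version: int
    user_id: str
    ts: int  # event time in seconds


def replay(events: Iterable[Event], reducer: Callable[[S, Event], S], initial: S) -> S:
    """Rebuild derived state by folding events in timestamp order."""
    state = initial
    for event in sorted(events, key=lambda e: e.ts):
        state = reducer(state, event)
    return state


def count_logins(state: dict[str, int], event: Event) -> dict[str, int]:
    """Example reducer: logins per user. Swap it out when feature logic changes."""
    if event.name == "login":
        state = {**state, event.user_id: state.get(event.user_id, 0) + 1}
    return state


log = [
    Event("login", 1, "u1", 30),
    Event("export", 1, "u1", 20),
    Event("login", 1, "u2", 10),
    Event("login", 1, "u1", 40),
]
logins = replay(log, count_logins, {})
```

Because the log is immutable and the reducer is pure, a changed feature definition only needs a new reducer and a replay, not a new round of data collection.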

Clickstream and workflow events train models predicting churn or suggesting next best actions. Scalability in SaaS architecture is defined by the ability to absorb complexity without requiring significant re-architecture as usage increases.

Integrating Vector Search And Retrieval Into SaaS Workflows

Vector databases and embeddings power semantic search, recommendations, and retrieval augmented generation. Examples include searching knowledge bases or product usage patterns using similarity rather than keywords, achieving 92% recall.

Design considerations include index refresh strategies, multi-tenant isolation through namespaces, and fallbacks to keyword search. Monitor hit rates targeting 80% or higher tied to customer experience metrics.
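
The namespace isolation and keyword fallback described above can be sketched without any vector database. `TenantIndex`, the `min_score` cutoff of 0.8, and the toy two-dimensional embeddings are assumptions for illustration. A real deployment would use an embedding model and a managed index.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TenantIndex:
    """In-memory index that keeps each tenant's documents in its own namespace."""

    def __init__(self) -> None:
        self._docs: dict[str, list[tuple[str, list[float]]]] = {}

    def add(self, tenant: str, text: str, embedding: list[float]) -> None:
        self._docs.setdefault(tenant, []).append((text, embedding))

    def search(self, tenant: str, query_text: str, query_embedding: list[float],
               min_score: float = 0.8, top_k: int = 3) -> list[str]:
        docs = self._docs.get(tenant, [])  # only this tenant's namespace is read
        scored = sorted(
            ((cosine(query_embedding, emb), text) for text, emb in docs),
            reverse=True,
        )
        hits = [text for score, text in scored[:top_k] if score >= min_score]
        if hits:
            return hits
        # Fallback: plain keyword match when no vector result is close enough.
        terms = query_text.lower().split()
        return [t for t, _ in docs if any(term in t.lower() for term in terms)][:top_k]


index = TenantIndex()
index.add("tenant-a", "reset password", [1.0, 0.0])
index.add("tenant-b", "billing export", [1.0, 0.0])
```

A query against `tenant-a` never sees the `tenant-b` document, even though its embedding is identical.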

Choosing Between Off-The-Shelf Models And Custom Models

Compare managed foundation models via APIs versus training custom models on proprietary SaaS data. Trade-offs involve speed, control, cost, and embedding product-specific behavior into models.

For 2026, many SaaS teams adopt hybrid approaches using APIs like Anthropic Claude at $3 per million tokens for generic tasks and custom fine-tuned models for 20% better domain precision. A scalable architecture must be built with modular components that allow for the integration of new capabilities, such as AI, without extensive system rewrites.

Implementing Observability For AI-Enabled Architectures

Monitor not just infrastructure metrics but model health, data pipeline status, and user-level outcomes. Logs and traces should include correlation IDs tying AI decisions back to input data using OpenTelemetry.

Alert on thresholds like 5% accuracy drops or spikes in user corrections. An API-first approach allows AI agents to interact directly with software, enhancing functionality without requiring a UI.
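
A minimal sketch of the two ideas follows: a correlation ID attached to every AI decision, and a threshold alert on accuracy. It uses only the standard library, not the OpenTelemetry SDK. The `Decision` record and its field names are hypothetical, and the 5% drop is interpreted here as five absolute percentage points, which is an assumption.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Decision:
    """One AI decision, traceable back to the input that produced it."""
    model: str
    input_ref: str  # pointer to the stored input, e.g. a ticket ID
    output: str
    correlation_id: str = field(default_factory=lambda: uuid.uuid4().hex)


def accuracy_alert(baseline_acc: float, current_acc: float,
                   max_drop: float = 0.05) -> bool:
    """Alert when accuracy falls by at least `max_drop` (absolute) from baseline."""
    return baseline_acc - current_acc >= max_drop


first = Decision(model="router-v2", input_ref="ticket-881", output="billing-queue")
second = Decision(model="router-v2", input_ref="ticket-882", output="tech-queue")
```

Logging `correlation_id` alongside downstream user corrections makes it possible to join an unhelpful output back to the exact input and model version.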

Planning For Multi-Cloud And Vendor Resilience

Over-dependence on a single model provider or cloud service creates risk for mission-critical SaaS features. Abstraction layers for AI providers via LangChain, portable Parquet formats, and backup inference paths maintain resilience within a broader future of SaaS development in a cloud-first world.
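
One way to picture an abstraction layer with a backup inference path is a thin function that tries providers in order. This sketch does not use LangChain. `ProviderError`, `complete_with_fallback`, and the two stand-in providers are hypothetical names, and real provider clients would be wrapped to raise the shared error type.

```python
from typing import Callable, Sequence

Provider = Callable[[str], str]


class ProviderError(Exception):
    """Raised by a provider wrapper when a call fails or is rejected."""


def complete_with_fallback(prompt: str,
                           providers: Sequence[tuple[str, Provider]]) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, completion)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in providers for illustration only.
def flaky_primary(prompt: str) -> str:
    raise ProviderError("rate limited")


def echo_backup(prompt: str) -> str:
    return f"echo: {prompt}"


used, answer = complete_with_fallback(
    "hi", [("primary", flaky_primary), ("backup", echo_backup)]
)
```

Keeping product code dependent only on the `Provider` signature is what makes a later vendor switch a configuration change rather than a rewrite.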

When cost or policy changes require rapid migration, resilient data foundations and clear contracts make shifts feasible. Effective SaaS architecture aligns strategic clarity, technical foundations, user experience, and execution capacity to enable compounding growth.

Organizational Capabilities For AI-Ready SaaS Teams

Technology alone cannot deliver AI readiness. Assessing AI readiness for a SaaS company requires evaluating technical foundations and organizational culture to integrate AI successfully.

Defining Roles And Skills Across Product, Data, And Engineering

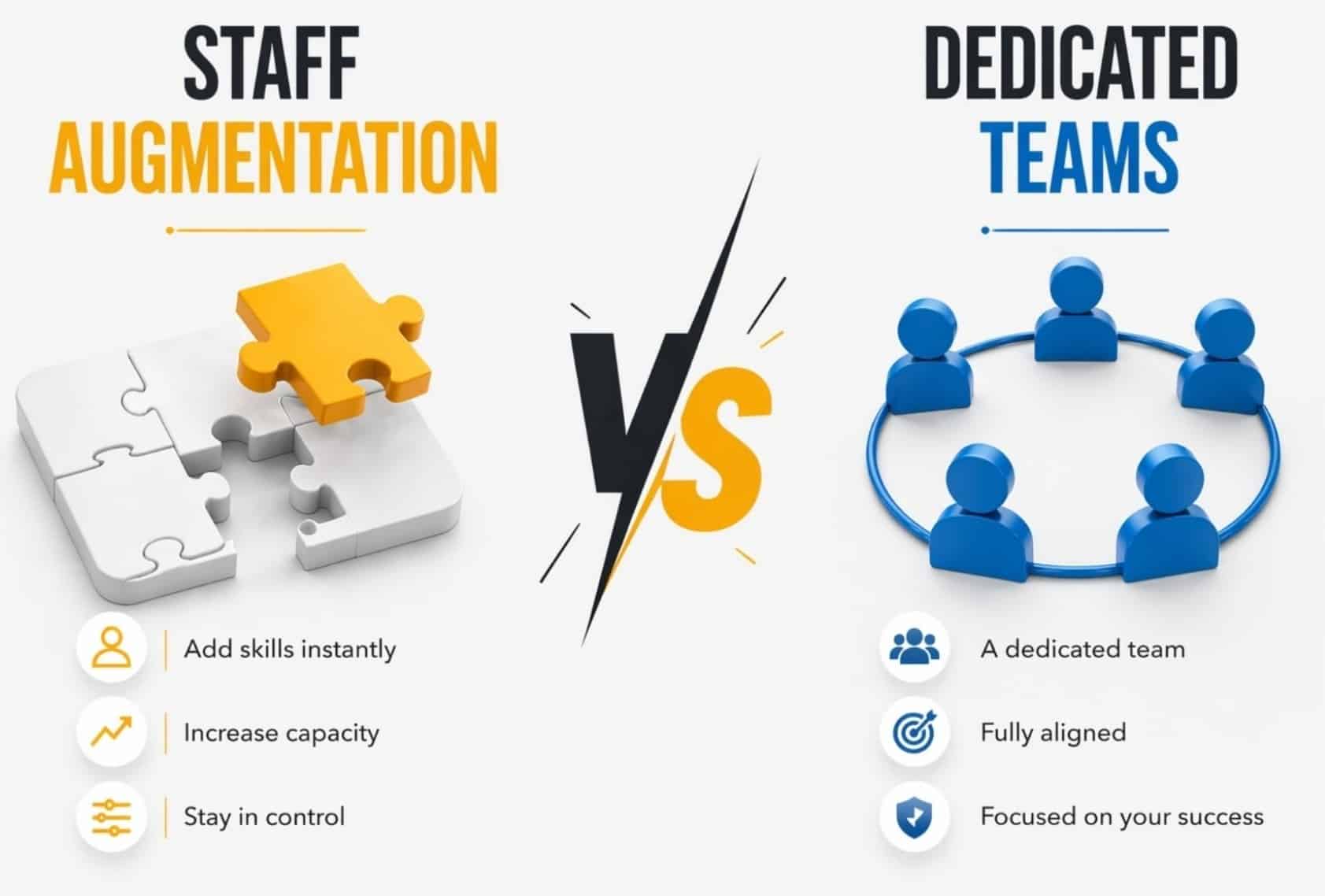

Key roles include AI product manager, data engineer, ML engineer, and analytics engineer. Small teams may combine responsibilities but need clear accountability for data quality and model performance. Upskilling existing product and engineering staff in data literacy and prompt design yields 80% productivity gains. AI integration requires a fundamental reinvention of both product strategy and company operating model, with a focus on risk, experimentation, and being product-obsessed, often supported by specialized tech consulting services that help modern businesses grow.

In SaaS companies, business and product leaders must align roles to measurable business outcomes. Avoid isolated features and technical debt from legacy systems, and focus on building systems with a clear path for AI add-ons.

Creating An Experimentation And Learning Culture

AI readiness benefits from a culture treating experiments as systematic learning with hypothesis-driven development, A/B testing via GrowthBook, and shared experiment repositories. Time-boxed experiments on copy generation, decision support routing, or recommendations with clear success criteria enable moving faster. Communicate results openly, celebrating disciplined experimentation even when experiments fail, and draw on resources like the GainHQ blog on software development and digital transformation to spread best practices internally.

Innovation requires confidence across teams regardless of market conditions. SaaS companies gain momentum by testing beyond isolated features, and internal knowledge sharing helps business leaders guide experimentation toward measurable business outcomes.

Equipping Customer-Facing Teams To Explain And Support AI

Support, sales, and customer success teams must understand how AI features work. Enablement materials include internal FAQs, demo scripts, and troubleshooting guides for AI behaviors. Collect structured feedback from these teams who detect early issues with AI outputs. Strong data foundations make it possible to answer customer questions about provenance confidently.

Customer teams in SaaS environments handle sensitive content and must avoid risks from legacy systems. SaaS leaders should ensure AI add-ons are built on systems that improve confidence and measurable business outcomes.

Integrating AI Metrics Into Regular Business Reviews

Leadership meetings should include concise sections on AI initiatives covering adoption, impact, and upcoming releases. Frame AI metrics within overall business performance, not as isolated technical dashboards. Track how AI-powered onboarding affects activation rates or how AI forecasting influences pipeline accuracy. Simple scorecards tracking readiness across data, infrastructure, and capabilities reinforce AI as core company capability, and should align tightly with a forward-looking SaaS product roadmap for 2026.

Business leaders should track measurable business outcomes while reducing technical debt from isolated features. Metrics with a clear path to outcomes help SaaS companies adapt to market conditions and maintain momentum on scalable systems.

Supporting Continuous Education And Governance Processes

Internal AI workshops, playbooks, and monthly office hours keep teams aligned with evolving practices. Governance bodies review new AI features for compliance, security, and user impact before launch. Quarterly governance reviews evaluate roadmap items against data and risk standards. Lightweight but consistent governance avoids bottlenecks while providing oversight and should reflect the broader role of AI in SaaS, its benefits, challenges, and future trends.

Governance protects sensitive content while enabling innovation in SaaS companies. SaaS leaders should ensure AI add-ons do not increase technical debt from legacy systems, maintaining confidence and measurable business outcomes across teams.

Partnering And Buying Versus Building AI Capabilities

Criteria for build versus buy depend on maintaining internal ownership of data models, governance, and core intellectual property. Partnering accelerates time to market for capabilities such as vector search via Pinecone or managed model hosting. Forward-looking SaaS companies are focusing on becoming AI-native platforms, prioritizing data as a product. Contractual protections around data usage, portability, and service continuity remain essential in 2026 vendor agreements, especially when following a structured guide to integrating AI into SaaS products.

Companies must evaluate building in-house versus buying from vendors based on market conditions and momentum. Business leaders should choose partners that reduce technical debt, avoid isolated features, and create a clear path toward measurable business outcomes, whether through smooth cloud migration planning for growing teams or by deciding between a replatform vs rebuild strategy for long-term platform growth.

How GainHQ Helps SaaS Teams Build AI-Ready Products

We position GainHQ as a strategic partner for SaaS firms constructing AI-ready products, emphasizing data foundations, architecture, and strategy from inception. Our engagements commence with assessments scoring data maturity, systems composability, and team capabilities, delivering 12-month roadmaps aligned with business outcomes, supported by our broader custom software development services for scalable SaaS products.

Preparing a SaaS business for AI integration requires transformations in data infrastructure, team skill sets, and an outcome-driven strategy, including a clear LLM integration strategy for SaaS platforms in 2026. In one engagement, a $10M ARR CRM unified data silos via lakehouse architecture, launching pipeline AI that boosted NRR by 12%. Another support SaaS refined features for custom models, achieving 45% automation in 9 months while maintaining customer trust, similar to our AI features case study that increased engagement by 34%.

Frequently Asked Questions

How Long Does It Typically Take A SaaS Company To Reach Practical AI Readiness?

Realistic timelines range from six to eighteen months depending on current data maturity, architecture, and team experience. Early wins appear in the first three months through targeted use cases like AI search, while deep readiness requires sustained investment. Parallel efforts on data foundations and pilot use cases help shorten overall timelines to 12 months for mature firms.

What Is A Sensible Initial Budget For AI Readiness Work In A Growth Stage SaaS?

Initial budgets typically allocate 10-20% of engineering and product spend, translating to $500k-$2M for companies at $50M ARR. Costs center on data pipeline modernization and cross-functional teams focused on AI experiments. Clear success metrics showing 20% ROI help justify incremental budget as AI projects demonstrate impact on revenue growth and margins.

Can A SaaS Company Rely Entirely On External AI Providers And Still Be Considered AI-Ready?

Using external models is common, but AI readiness depends on internal control of data foundations, governance, and product integration. Pure reliance on external providers risks 30% higher failure rates. Balance external providers for generic capabilities with internal systems handling domain-specific intelligence and monitoring, guided by an AI model selection framework for startups and teams in 2026.

How Should A SaaS Startup With Limited Data Approach AI Readiness?

Design data collection intentionally from day one and focus on AI features that leverage behavioral signals early. In 2026, AI enables context-aware, behavior-driven experiences in SaaS applications, significantly enhancing user engagement and retention. Start small with external models and strong instrumentation, building proprietary datasets over one to two years as usage accumulates, taking cues from proven case studies of successful SaaS launch stories.

What Are Common Warning Signs That A SaaS Platform Is Not AI Ready Yet?

Warning signs include scattered data across six or more tools, inconsistent IDs with 20% match rates, manual weekly reporting, and API instability below 99% uptime. No clear ownership of data or AI initiatives signals fundamental gaps. Recognizing these issues early allows teams to prioritize foundational work and add AI capabilities systematically rather than attempting complex features prematurely.